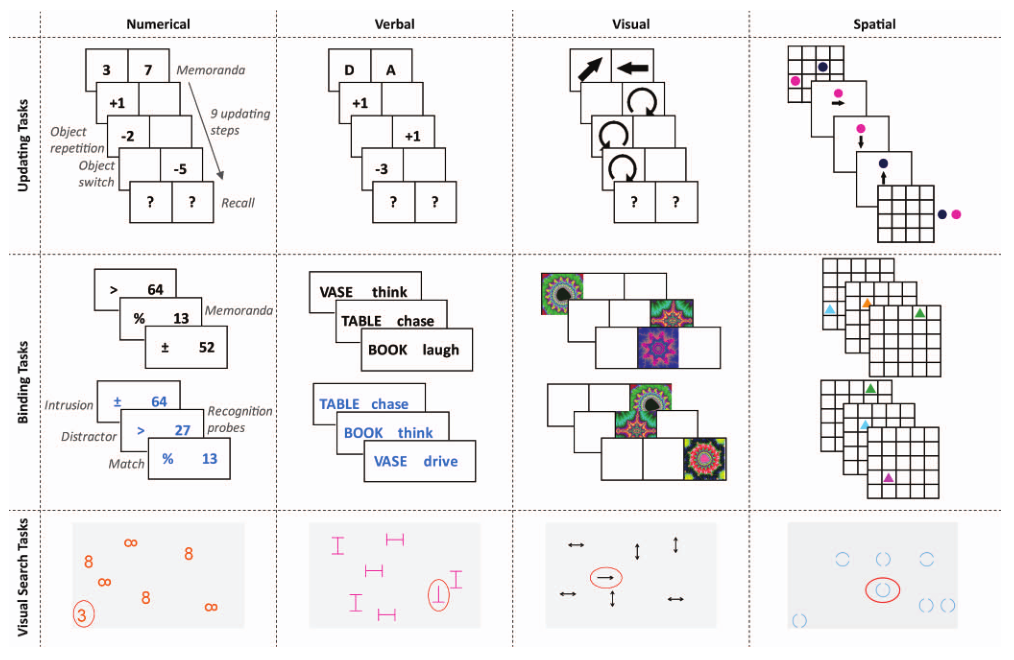

class: center, middle, inverse, title-slide # Statistical Modeling in R ## The Basics ### Henrik Singmann (University of Warwick)<br/>Twitter: <a href='https://twitter.com/HenrikSingmann'><span class="citation">@HenrikSingmann</span></a> ### Jul 2019 --- class: inline-grey # Summary: Analysis with Statistical Models in R 1. Identify probability distribution of data (more correct: of residuals/conditional distribution) 2. Make sure variables are of correct type via `str()` or `tibble::glimpse()` 3. Set appropriate contrasts (orthogonal contrasts if model includes interaction): `afex::set_sum_contrasts()` 4. Describe statistical model using `formula` 4. Fit model: pass `formula` and `data.frame` to corresponding modeling function (e.g., `lm()`, `glm()`) 4. Check model fit (e.g., inspect residuals) 5. Test terms (i.e., main effects and interactions): Pass fitted model to `car::Anova()` 7. Follow-up tests: - Estimated marginal means: Pass fitted model to `emmeans::emmeans()` (formerly:`lsmeans::lsmeans()`) - Specify specific contrasts on estimated marginal means (e.g., `contrast()`, `pairs()`) `afex` combines correct coding (3.), fitting (5.) and testing (7.): - ANOVAs: `afex::aov_car()`, `afex::aov_ez()`, or `afex::aov_4()` - (Generalized) linear mixed-effects models: `afex::mixed()` --- # Overview: Part I - Statistical Modeling with `lm` (no mixed-model) - Model setup and model formulas - Continuous versus categorical covariates - `model.matrix()` and factor codings. - Categorical covariates and interactions -- - Tests of Model Terms/Effects with `car::Anova()` - Follow-up Tests with `emmeans` -- - ANOVAs with `afex` -- - Problem with Repeated-Measures: IID assumption - Solution: Different ways of *pooling* - Pooling: worked examples --- # Statistical Model From [Wikipedia](https://en.wikipedia.org/wiki/Statistical_model) (emphasis added): > A statistical model is a class of mathematical model, which embodies a set of assumptions concerning the generation of some sample data, and similar data from a larger population. A statistical model represents, often in considerably idealized form, the **data-generating process**. > The assumptions embodied by a statistical model describe a set of **probability distributions**, some of which are assumed to adequately approximate the distribution from which a particular data set is sampled. The probability distributions inherent in statistical models are what distinguishes statistical models from other, non-statistical, mathematical models. > A statistical model is usually specified by mathematical equations that relate one or more random variables and possibly other non-random variables. As such, "a model is a formal representation of a theory" (Herman Ader quoting Kenneth Bollen). > All statistical hypothesis tests and all statistical estimators are derived from statistical models. More generally, statistical models are part of the foundation of statistical inference. --- class: small # Some Example Data: De Simoni & von Bastian (2018, JEP:G) - Partial data from working memory training study (20 sessions over 5 weeks), post training measurement - 197 participants - 2 testing orders - 3 training groups - 3 continuous variables, each is average of 4 tasks: - accuracy in working memory updating task - `\(d'\)` (d-prime) working memory binding task (pair recognition) - accuracy in 4 different reasoning tasks (diagramming relationships, letter sets, location sets, Raven matrices) -- ```r library("tidyverse") load("ds_vb_18.rda") ## or: load(url("http://singmann.org/download/r/ds_vb_18.rda")) summary(ds_vb_18) ## or: psych::describe(ds_vb_18) ``` ``` ## code order training updating binding reasoning ## 2 : 1 A: 94 control :72 Min. :0.0744 Min. :-0.0272 Min. :0.148 ## 3 : 1 B:103 updating:59 1st Qu.:0.4714 1st Qu.: 0.8250 1st Qu.:0.578 ## 4 : 1 binding :66 Median :0.5872 Median : 1.1923 Median :0.692 ## 5 : 1 Mean :0.5756 Mean : 1.2790 Mean :0.669 ## 6 : 1 3rd Qu.:0.6942 3rd Qu.: 1.6166 3rd Qu.:0.767 ## 7 : 1 Max. :0.9023 Max. : 3.0249 Max. :0.932 ## (Other):191 ``` - Source: https://osf.io/fy5ku/ --- class: small  --- class: small ## Some Example Data: De Simoni & von Bastian (2018, JEP:G) ```r theme_set(theme_bw(base_size = 17)) wm2 <- ds_vb_18 %>% gather(key = "wm_task", value = "wm_performance", binding, updating) ggplot(wm2, aes(x = wm_performance, y = reasoning)) + geom_point(alpha = 0.5) + facet_wrap(~ wm_task, scales = "free_x") ``` <!-- --> --- class: center, middle, inverse # Learning-Goal: # Understand/Interpret Parameters in Linear Regression --- # Linear Regression Model: Does WM predict reasoning? - `\(\bf{y}\)` = vector of reasoning accuracies of length `\(n\)` (*dependent variable*) - `\(\bf{x_{\mbox{WM}}}\)` = vector of WM performance of length `\(n\)` (*independent variable* or *covariate*) `$$y_i = \beta_0x_{0,i}+\beta_{\mbox{WM}}x_{\mbox{WM},i}+\epsilon_i, \ \ i = 1, ..., n, \\ \bf{\epsilon} \sim \mathcal{N}(0, \sigma^2_{\epsilon}),$$` where `\(\bf{x_0}\)` is a vector of 1s of length `\(n\)`. - Errors `\(\bf{\epsilon}\)` are assumed to come from a normal distribution (i.e., uncorrelated). - `\(\beta_0\)` and `\(\beta_{\mbox{WM}}\)` are scalars (i.e., of length 1) and called *regression coefficients* or *parameters* ( `\(\sigma^2_{\epsilon}\)` is also a parameter). `\(\beta_0\)` is also known as the *intercept*. -- ****** In matrix form this model can be expressed as: `$$\bf{y} = \bf{X}\bf{\beta}+\bf{\epsilon}$$` --- class: small # Linear Model in R .pull-left2[ ```r m1 <- lm(reasoning ~ binding, ds_vb_18) summary(m1) ``` ``` ## ## Call: ## lm(formula = reasoning ~ binding, data = ds_vb_18) ## ## Residuals: ## Min 1Q Median 3Q Max ## -0.3986 -0.0767 0.0135 0.0873 0.2332 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 0.5352 0.0187 28.58 < 2e-16 *** ## binding 0.1047 0.0132 7.95 1.5e-13 *** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 0.115 on 195 degrees of freedom ## Multiple R-squared: 0.245, Adjusted R-squared: 0.241 ## F-statistic: 63.2 on 1 and 195 DF, p-value: 1.48e-13 ``` ] -- .pull-right2[ ```r coef(m1) ``` ``` ## (Intercept) binding ## 0.5352 0.1047 ``` ```r ggplot(ds_vb_18, aes(x = binding, y = reasoning)) + geom_point(alpha = 0.2) + geom_smooth(method = "lm", se = FALSE) ``` <!-- --> ] --- class: small # Linear Model in R (Centered) .pull-left2[ ```r ds_vb_18 <- ds_vb_18 %>% mutate(binding_c = binding - mean(binding), updating_c = updating - mean(updating)) m2 <- lm(reasoning ~ binding_c, ds_vb_18) summary(m2) ``` ``` ## ## Call: ## lm(formula = reasoning ~ binding_c, data = ds_vb_18) ## ## Residuals: ## Min 1Q Median 3Q Max ## -0.3986 -0.0767 0.0135 0.0873 0.2332 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 0.66914 0.00817 81.88 < 2e-16 *** ## binding_c 0.10472 0.01317 7.95 1.5e-13 *** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 0.115 on 195 degrees of freedom ## Multiple R-squared: 0.245, Adjusted R-squared: 0.241 ## F-statistic: 63.2 on 1 and 195 DF, p-value: 1.48e-13 ``` ] .pull-right2[ ```r coef(m2) ``` ``` ## (Intercept) binding_c ## 0.6691 0.1047 ``` ```r ggplot(ds_vb_18, aes(x = binding_c, y = reasoning)) + geom_point(alpha = 0.2) + geom_smooth(method = "lm", se = FALSE) ``` <!-- --> ] --- class: small # Linear Model in R (Scaled) .pull-left2[ ```r m2b <- lm(reasoning ~ scale(binding), ds_vb_18) summary(m2b) ``` ``` ## ## Call: ## lm(formula = reasoning ~ scale(binding), data = ds_vb_18) ## ## Residuals: ## Min 1Q Median 3Q Max ## -0.3986 -0.0767 0.0135 0.0873 0.2332 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 0.66914 0.00817 81.88 < 2e-16 *** ## scale(binding) 0.06512 0.00819 7.95 1.5e-13 *** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 0.115 on 195 degrees of freedom ## Multiple R-squared: 0.245, Adjusted R-squared: 0.241 ## F-statistic: 63.2 on 1 and 195 DF, p-value: 1.48e-13 ``` - `scale()` calculates z-transformation (minus mean divided by SD) and can be used within formulas - **Warning:** `scale()` does not return vector but matrix ] .pull-right2[ ```r coef(m2b) ``` ``` ## (Intercept) scale(binding) ## 0.66914 0.06512 ``` ```r ggplot(ds_vb_18, aes(x = scale(binding), y = reasoning)) + geom_point(alpha = 0.2) + geom_smooth(method = "lm", se = FALSE) ``` <!-- --> ] --- ```r GGally::ggscatmat(ds_vb_18[, 4:6], alpha = 0.3) ``` <!-- --> --- class: small ### Multiple Regression ```r m3 <- lm(reasoning ~ binding_c + updating_c, ds_vb_18) summary(m3) ``` ``` ## ## Call: ## lm(formula = reasoning ~ binding_c + updating_c, data = ds_vb_18) ## ## Residuals: ## Min 1Q Median 3Q Max ## -0.29348 -0.05412 0.00319 0.06944 0.22621 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 0.66914 0.00693 96.56 < 2e-16 *** ## binding_c 0.02025 0.01474 1.37 0.17 ## updating_c 0.47673 0.05428 8.78 8.3e-16 *** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 0.0973 on 194 degrees of freedom ## Multiple R-squared: 0.46, Adjusted R-squared: 0.454 ## F-statistic: 82.5 on 2 and 194 DF, p-value: <2e-16 ``` -- - Why is estimate of `binding_c` reduced by > 80% (was 0.1047)? -- - In multiple regression, parameter estimates reflect effect after "controlling" for influence of all other predictors! - What remains from a specific effect, if others variables are taken into account? --- class: small ### "Controlling"? ```r ## Full Model summary(m3)$coefficients %>% zapsmall(6) ``` ``` ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 0.6691 0.0069 96.557 0.0000 ## binding_c 0.0202 0.0147 1.374 0.1711 ## updating_c 0.4767 0.0543 8.783 0.0000 ``` -- ```r ## binding m3_b <- lm(binding_c ~ updating_c, ds_vb_18) ds_vb_18$resid_b <- residuals(m3_b) summary(lm(reasoning ~ 0 + resid_b, ds_vb_18))$coefficients ``` ``` ## Estimate Std. Error t value Pr(>|t|) ## resid_b 0.02025 0.1036 0.1955 0.8452 ``` -- ```r ## updating m3_u <- lm(updating_c ~ binding_c, ds_vb_18) ds_vb_18$resid_u <- residuals(m3_u) summary(lm(reasoning ~ 0 + resid_u, ds_vb_18))$coefficients ``` ``` ## Estimate Std. Error t value Pr(>|t|) ## resid_u 0.4767 0.38 1.255 0.2111 ``` --- class: inline-grey ### Exercise 1.1 (Multiple Regression) - Open `exercises/exercise_1.1_MR.Rmd` (or the exercise handout or `exercises/exercise_1.1_MR.nb.html` for a nicer format of the instruction). - Follow text and try to solve two small tasks helping you to get comfortable with multiple regression. - The data comes with `R`, `data(USArrests)`, and contains statistics, in arrests per 100,000 residents, for assault, murder, and rape in each of the 50 US states in 1973. Also given is the percent of the population living in urban areas. - Research question: *Which variables predict murder rates and to what degree?* - Task 1: Create one simple linear regression model with murder rate as dependent variable and each of the other variables as independent variable. - Which of the three variables shows a significant effect on murder rates? - Task 2: Create one overall model with murder rate as dependent variable and the three other variables as independent variables (without interactions). - Which of the three variables now shows a significant effect on murder rates? If there are differences, how can these differences be explained? --- ## Interim Summary: Linear and Multiple Regression - Linear regression summarizes linear relationship with regression parameters, allows prediction - Intercept (usually `\(b_0\)` or `\(\beta_0\)`): expected value of DV at origin of IVs (i.e., when IVs are 0) - Slopes (e.g., `\(b_1\)` or `\(\beta_1\)`): gradient of line, amount by which `\(y\)` (DV) increases if `\(x\)` (IV) increases by 1 -- - Linear transformation of variables does not change significance of slope or adequacy of model - transformation can simplify interpretation of estimates and comparison across scales - in 1 predictor case: if both variables z-transformed, slope = Pearson correlation -- - Regression allows including several predictors - Potential problems **multicollinearity** (i.e., predictors correlated among each other) - imprecise regression estimates - increases standard errors - Potentially suppression effects or other regression problems can occur. - Parameter estimates reflects unique contribution of each predictor (i.e., beyond what is explained by other variables or controlling for other variables) -- ### Take-Home Message - If you fit a regression type model, always attempt to understand what the estimated coefficients mean. Without being able to explain what the parameters mean, you do not really know what you are doing. - Correlations among predictors cannot be ignored and are potentially dangerous. They affect which conclusions can be drawn from data and model. --- .pull-left[ ### Income and Prestige ```r data("Prestige", package = "carData") glimpse(Prestige, width = 50) ``` ``` ## Observations: 102 ## Variables: 6 ## $ education <dbl> 13.11, 12.26, 12.77, 11.42,... ## $ income <int> 12351, 25879, 9271, 8865, 8... ## $ women <dbl> 11.16, 4.02, 15.70, 9.11, 1... ## $ prestige <dbl> 68.8, 69.1, 63.4, 56.8, 73.... ## $ census <int> 1113, 1130, 1171, 1175, 211... ## $ type <fct> prof, prof, prof, prof, pro... ``` - Occupations in Canada in 1971 - Each data point is one occupation - `income`: Average income of incumbents, dollars, in 1971. - `education`: Average education of occupational incumbents, years, in 1971. - `prestige`: Pineo-Porter prestige score for occupation, from a social survey conducted in the mid-1960s (from 0 to 100). ] -- .pull-right[ ```r GGally::ggscatmat(Prestige, columns = c(1, 4, 2)) ``` <!-- --> ] --- ```r mp_1 <- lm(income ~ education + prestige, Prestige) summary(mp_1) ``` ``` ## ## Call: ## lm(formula = income ~ education + prestige, data = Prestige) ## ## Residuals: ## Min 1Q Median 3Q Max ## -6649 -1453 102 1234 14901 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) -714.7 1257.5 -0.57 0.57 ## education -169.6 207.0 -0.82 0.41 ## prestige 199.3 32.8 6.07 2.4e-08 *** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 2990 on 99 degrees of freedom ## Multiple R-squared: 0.514, Adjusted R-squared: 0.505 ## F-statistic: 52.4 on 2 and 99 DF, p-value: 2.96e-16 ``` --- ```r mp_1b <- lm(income ~ scale(education) + scale(prestige), Prestige) summary(mp_1b) ``` ``` ## ## Call: ## lm(formula = income ~ scale(education) + scale(prestige), data = Prestige) ## ## Residuals: ## Min 1Q Median 3Q Max ## -6649 -1453 102 1234 14901 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 6798 296 22.97 < 2e-16 *** ## scale(education) -463 565 -0.82 0.41 ## scale(prestige) 3429 565 6.07 2.4e-08 *** ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 2990 on 99 degrees of freedom ## Multiple R-squared: 0.514, Adjusted R-squared: 0.505 ## F-statistic: 52.4 on 2 and 99 DF, p-value: 2.96e-16 ``` --- ```r mp_3 <- lm(income ~ scale(education)*scale(prestige), Prestige) summary(mp_3) ``` ``` ## ## Call: ## lm(formula = income ~ scale(education) * scale(prestige), data = Prestige) ## ## Residuals: ## Min 1Q Median 3Q Max ## -7107 -1606 130 1036 15346 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 6143 400 15.36 <2e-16 *** ## scale(education) -596 555 -1.07 0.29 ## scale(prestige) 3215 559 5.75 1e-07 *** ## scale(education):scale(prestige) 778 328 2.37 0.02 * ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 2920 on 98 degrees of freedom ## Multiple R-squared: 0.541, Adjusted R-squared: 0.527 ## F-statistic: 38.5 on 3 and 98 DF, p-value: <2e-16 ``` --- class: small - Interaction: Effect of one variable depends on value of another variable! - For interactions of continuous covariates, it is common to inspect effect conditional at mean and plus/minus 1 SD from mean (Cohen et al., 2002). -- ```r library("emmeans") get_z_positions <- function(x, at = c(-1, 0, 1), round = 2) { return(round(mean(x) + at*sd(x), round)) } pres_t <- emtrends(mp_3, specs = "education", var = "prestige", at = list(education = get_z_positions(Prestige$education))) %>% summary ``` .pull-left[ ```r pres_t ``` ``` ## education prestige.trend SE df lower.CL upper.CL ## 8.01 142 40.2 98 61.8 222 ## 10.74 187 32.5 98 122.4 251 ## 13.47 232 35.0 98 162.8 302 ## ## Confidence level used: 0.95 ``` ```r pres_t$prestige.trend * sd(Prestige$prestige) ``` ``` ## [1] 2437 3216 3995 ``` ] .pull-right[ ```r summary(mp_3)$coefficients %>% zapsmall ``` ``` ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 6143 400 15 0 ## scale(education) -596 555 -1 0 ## scale(prestige) 3215 559 6 0 ## scale(education):scale(prestige) 778 328 2 0 ``` ] --- - 2-way interaction always allows 2 interpretations: One variable is focal, other is conditioning variable. ```r edu_t <- emtrends(mp_3, specs = "prestige", var = "education", at = list(prestige = get_z_positions(Prestige$prestige))) %>% summary edu_t ``` ``` ## prestige education.trend SE df lower.CL upper.CL ## 29.6 -503.7 246 98 -993 -14.5 ## 46.8 -218.5 203 98 -622 185.1 ## 64.0 66.9 226 98 -381 514.5 ## ## Confidence level used: 0.95 ``` .pull-left[ ```r edu_t$education.trend * sd(Prestige$education) ``` ``` ## [1] -1374.3 -596.2 182.4 ``` ] .pull-right[ ```r summary(mp_3)$coefficients %>% zapsmall ``` ``` ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 6143 400 15 0 ## scale(education) -596 555 -1 0 ## scale(prestige) 3215 559 6 0 ## scale(education):scale(prestige) 778 328 2 0 ``` ] --- class: small - Additionally, we should inspect interaction by transforming one variable into categorical variable! ```r Prestige <- Prestige %>% mutate(edu_cat = cut(education, 3, labels = c("low", "medium", "high")), prestige_cat = cut(prestige, 3, labels = c("low", "medium", "high")) ) ``` .pull-left[ <!-- --> ] .pull-right[ <!-- --> ] --- class: inline-grey ## Formula Interface for Statistical Models: `~` Allows symbolic specification of statistical model, e.g. linear models: `lm(reasoning ~ binding, ds_vb_18)` Everything to the left of `~` is the dependent variable: ```r y ~ x # univariate model cbind(y1, y2, y3) ~ x # multivariate model ~ x # one sided formula ``` Independent variables are to the right of the `~`: | Formula | | Interpretation | | ------------------------|---|----------------------------------| | `~ x` or `~1+x` || Intercept and main effect of `x` | | ` ~ x-1` or `~0 + x` || Only main effect of `x` and no intercept (questionable) | | `~ x+y` || Main effects of `x` and `y`| | `~ x:y` || Interaction between `x` and `y` (and no main effect) | | `~ x*y` or `~ x+y+x:y` || Main effects and interaction between `x` and `y` | --- class: small ## Exercise 1.2: How many Parameters in each Model? (Continuous) ```r lm(reasoning ~ binding + updating, ds_vb_18) # a lm(reasoning ~ binding : updating, ds_vb_18) # b lm(reasoning ~ 0 + binding:updating, ds_vb_18) # c lm(reasoning ~ binding*updating, ds_vb_18) # d lm(reasoning ~ 0+binding*updating, ds_vb_18) # e ``` -- .pull-left[ ```r coef(lm(reasoning ~ binding + updating, ds_vb_18)) ``` ``` ## (Intercept) binding updating ## 0.36883 0.02025 0.47673 ``` ```r coef(lm(reasoning ~ binding : updating, ds_vb_18)) ``` ``` ## (Intercept) binding:updating ## 0.5565 0.1400 ``` ```r coef(lm(reasoning ~ 0+binding:updating, ds_vb_18)) ``` ``` ## binding:updating ## 0.6208 ``` ] .pull-right[ ```r coef(lm(reasoning ~ binding*updating, ds_vb_18)) ``` ``` ## (Intercept) binding ## 0.34385 0.04942 ## updating binding:updating ## 0.51703 -0.04416 ``` ```r coef(lm(reasoning ~ 0+binding*updating, ds_vb_18)) ``` ``` ## binding updating ## 0.3300 1.0788 ## binding:updating ## -0.4746 ``` ] --- ### Interim Summary - A linear model attempts to describe data generating process of DV using IVs and distributional assumptions (e.g., normal residuals) - R formula interface allows specification in symbolic form - Regression equation allows predictions for arbitrary values of IVs -- - Linear transformation of variables affects interpretation of estimates, but not overall adequacy of model - Multiple regression estimates coefficients while "controlling" for other IVs - Multicollinearity affects estimates and their precision -- - Interactions test if effect of one variable depend on value of other variable (i.e., magnitude of effect of one variable changes conditional on value of other variable) - Interpretation of interaction often not obvious (e.g., 2-way interaction has two equally valid possibilities, context matters) - If model involves interaction, estimates of lower order effects are effect if other variables are 0 (centring of variables often provides reasonable interpretation if interactions are involved) -- ### Take-Home Message - Interactions of continuous variables are not trivial. Avoid if not absolutely necessary. - If interaction is included, it affects the involved lower-order effects as well! --- class: center, middle, inverse # Categorical Covariates --- class: small # Categorical Covariates `R` modeling functions behave differently for numerical and categorical covariates. It is important to always know of what type variables are. Use `str()` on a `data.frame` (or `glimpse()` after loading the `tidyverse`) to obtain information regarding the structure, including variable types: ```r str(ds_vb_18) ## alternatively tibble::glimpse(ds_vb_18) ``` ``` ## Classes 'tbl_df', 'tbl' and 'data.frame': 197 obs. of 10 variables: ## $ code : Factor w/ 197 levels "2","3","4","5",..: 1 2 3 4 5 6 7 8 9 10 ... ## $ order : Factor w/ 2 levels "A","B": 2 2 1 2 2 2 2 1 2 1 ... ## $ training : Factor w/ 3 levels "control","updating",..: 1 1 2 3 3 3 3 2 2 1 ... ## $ updating : num 0.752 0.581 0.702 0.647 0.232 ... ## $ binding : num 0.785 1.955 1.463 1.915 0.545 ... ## $ reasoning : num 0.775 0.691 0.689 0.752 0.482 ... ## $ binding_c : num -0.494 0.676 0.184 0.636 -0.734 ... ## $ updating_c: num 0.17589 0.00531 0.1262 0.07172 -0.34365 ... ## $ resid_b : num -0.9166 0.6634 -0.1189 0.4638 0.0914 ... ## $ resid_u : num 0.2634 -0.1145 0.0936 -0.041 -0.2136 ... ``` - Numerical covariates are `int` or `num`. - Categorical covariates are `Factor` (or `character`). -- **Make sure all categorical variables are factors before adding them to a statistical model!** --- class: small ## Models with Categorical Covariates We might be interested in testing whether reasoning performance differs between both orders in which tasks were presented. .pull-left2[ ```r m3 <- lm(reasoning ~ order, ds_vb_18) summary(m3) ``` ``` ## ## Call: ## lm(formula = reasoning ~ order, data = ds_vb_18) ## ## Residuals: ## Min 1Q Median 3Q Max ## -0.5223 -0.0901 0.0230 0.0986 0.2622 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 0.66992 0.01361 49.22 <2e-16 *** ## orderB -0.00149 0.01883 -0.08 0.94 ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 0.132 on 195 degrees of freedom ## Multiple R-squared: 3.22e-05, Adjusted R-squared: -0.0051 ## F-statistic: 0.00628 on 1 and 195 DF, p-value: 0.937 ``` ] -- .pull-right2[ ```r mean(ds_vb_18$reasoning) ``` ``` ## [1] 0.6691 ``` ```r ds_vb_18 %>% group_by(order) %>% summarise(m = mean(reasoning)) ``` ``` ## # A tibble: 2 x 2 ## order m ## <fct> <dbl> ## 1 A 0.6699 ## 2 B 0.6684 ``` ```r ds_vb_18 %>% group_by(order) %>% summarise(m=mean(reasoning)) %>% {.$m[2] - .$m[1]} ``` ``` ## [1] -0.001492 ``` ] --- class: small # R and Categorical Covariates - `model.matrix()` transforms categorical covariates into numerical variables that can be used for fitting using a specific contrast function (see `?contr.sum`). - `model.matrix()` is called internally by `lm` and other modeling functions and creates numerical IVs (i.e., `\(X\)` in regression equation) and usually does not need to be called by user. .pull-left[ ```r model.matrix(reasoning ~ order, ds_vb_18[1:5,]) ``` ``` ## (Intercept) orderB ## 1 1 1 ## 2 1 1 ## 3 1 0 ## 4 1 1 ## 5 1 1 ## attr(,"assign") ## [1] 0 1 ## attr(,"contrasts") ## attr(,"contrasts")$order ## [1] "contr.treatment" ``` ] --- class: small # R and Categorical Covariates - `model.matrix()` transforms categorical covariates into numerical variables that can be used for fitting using a specific contrast function (see `?contr.sum`). - `model.matrix()` is called internally by `lm` and other modeling functions and creates numerical IVs (i.e., `\(X\)` in regression equation) and usually does not need to be called by user. .pull-left[ ```r model.matrix(reasoning ~ order, ds_vb_18[1:5,]) ``` ``` ## (Intercept) orderB ## 1 1 1 ## 2 1 1 ## 3 1 0 ## 4 1 1 ## 5 1 1 ## attr(,"assign") ## [1] 0 1 ## attr(,"contrasts") ## attr(,"contrasts")$order ## [1] "contr.treatment" ``` ```r afex::set_sum_contrasts() ``` ``` ## setting contr.sum globally: options(contrasts=c('contr.sum', 'contr.poly')) ``` ] .pull-right[ ```r model.matrix(reasoning ~ order, ds_vb_18[1:5,]) ``` ``` ## (Intercept) order1 ## 1 1 -1 ## 2 1 -1 ## 3 1 1 ## 4 1 -1 ## 5 1 -1 ## attr(,"assign") ## [1] 0 1 ## attr(,"contrasts") ## attr(,"contrasts")$order ## [1] "contr.sum" ``` ] --- class: small ## Models with Categorical Covariates II Same model as before, but with sum/effects contrasts. .pull-left2[ ```r m4 <- lm(reasoning ~ order, ds_vb_18) summary(m4) ``` ``` ## ## Call: ## lm(formula = reasoning ~ order, data = ds_vb_18) ## ## Residuals: ## Min 1Q Median 3Q Max ## -0.5223 -0.0901 0.0230 0.0986 0.2622 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 0.669175 0.009413 71.09 <2e-16 *** ## order1 0.000746 0.009413 0.08 0.94 ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 0.132 on 195 degrees of freedom ## Multiple R-squared: 3.22e-05, Adjusted R-squared: -0.0051 ## F-statistic: 0.00628 on 1 and 195 DF, p-value: 0.937 ``` ] .pull-right2[ ```r mean(ds_vb_18$reasoning) ``` ``` ## [1] 0.6691 ``` ```r ds_vb_18 %>% group_by(order) %>% summarise(m = mean(reasoning)) ``` ``` ## # A tibble: 2 x 2 ## order m ## <fct> <dbl> ## 1 A 0.6699 ## 2 B 0.6684 ``` ```r ds_vb_18 %>% group_by(order) %>% summarise(m=mean(reasoning)) %>% summarise(mean(m)) ``` ``` ## # A tibble: 1 x 1 ## `mean(m)` ## <dbl> ## 1 0.6692 ``` ] --- class: small # Models with Categorical Covariates and Interactions ```r afex::set_default_contrasts() # or set_treatment_contrasts() ``` ``` ## setting contr.treatment globally: options(contrasts=c('contr.treatment', 'contr.poly')) ``` .pull-left2[ ```r m5 <- lm(reasoning ~ order*training, ds_vb_18) coef(m5) ``` ``` ## (Intercept) orderB ## 0.658263 0.031830 ## trainingupdating trainingbinding ## 0.042523 -0.002962 ## orderB:trainingupdating orderB:trainingbinding ## -0.067247 -0.040123 ``` ] .pull-right2[ ```r ds_vb_18 %>% group_by(order,training) %>% summarise(mean(reasoning)) ``` ``` ## # A tibble: 6 x 3 ## # Groups: order [2] ## order training `mean(reasoning)` ## <fct> <fct> <dbl> ## 1 A control 0.6583 ## 2 A updating 0.7008 ## 3 A binding 0.6553 ## 4 B control 0.6901 ## 5 B updating 0.6654 ## 6 B binding 0.6470 ``` ] --- class: small # Models with Categorical Covariates and Interactions II ```r afex::set_sum_contrasts() # or set_effects_contrasts() or set_deviation_contrasts() ``` ``` ## setting contr.sum globally: options(contrasts=c('contr.sum', 'contr.poly')) ``` .pull-left2[ ```r m6 <- lm(reasoning ~ order*training, ds_vb_18) coef(m6) ``` ``` ## (Intercept) order1 training1 training2 ## 0.669470 0.001980 0.004708 0.013608 ## order1:training1 order1:training2 ## -0.017895 0.015729 ``` ] .pull-right2[ ```r ds_vb_18 %>% group_by(order,training) %>% summarise(m=mean(reasoning)) %>% ungroup() %>% summarise(mean(m)) ``` ``` ## # A tibble: 1 x 1 ## `mean(m)` ## <dbl> ## 1 0.6695 ``` ] --- ## Exercise 1.2: How many Parameters in each Model? (Categorical) .pull-left3[ ```r reasoning ~ binding + updating # a: 3 reasoning ~ binding : updating, # b: 2 reasoning ~ 0 + binding:updating # c: 1 reasoning ~ binding*updating # d: 4 reasoning ~ 0+binding*updating # e: 3 reasoning ~ binding # f: 2 reasoning ~ 0 + binding # g: 1 ``` ] -- .pull-right3[ ```r lm(reasoning ~ order, ds_vb_18) # a lm(reasoning ~ 0+order, ds_vb_18) # b lm(reasoning ~ order+training, ds_vb_18) # c lm(reasoning ~ 0+order+training, ds_vb_18) # d lm(reasoning ~ order:training, ds_vb_18) # e lm(reasoning ~ 0+order:training, ds_vb_18) # f lm(reasoning ~ order*training, ds_vb_18) # g lm(reasoning ~ 0+order*training, ds_vb_18) # h lm(reasoning ~ order+order:training, ds_vb_18)# i ``` ```r levels(ds_vb_18$order) ``` ``` ## [1] "A" "B" ``` ```r levels(ds_vb_18$training) ``` ``` ## [1] "control" "updating" "binding" ``` ] --- class: small # Beware of Formulas with Categorical Variables ```r coef(lm(reasoning ~ order, ds_vb_18)) # a: 2 ``` ``` ## (Intercept) order1 ## 0.669175 0.000746 ``` ```r coef(lm(reasoning ~ 0+order, ds_vb_18)) # b: 2 ``` ``` ## orderA orderB ## 0.6699 0.6684 ``` ```r coef(lm(reasoning ~ order+training, ds_vb_18)) # c: 4 ``` ``` ## (Intercept) order1 training1 training2 ## 0.6694630 0.0008813 0.0056482 0.0127591 ``` ```r coef(lm(reasoning ~ 0+order+training, ds_vb_18)) # d: 4 ``` ``` ## orderA orderB training1 training2 ## 0.670344 0.668582 0.005648 0.012759 ``` --- class: small ```r coef(lm(reasoning ~ order:training, ds_vb_18)) # e: 7 ``` ``` ## (Intercept) orderA:trainingcontrol orderB:trainingcontrol orderA:trainingupdating ## 0.647008 0.011255 0.043085 0.053779 ## orderB:trainingupdating orderA:trainingbinding orderB:trainingbinding ## 0.018361 0.008293 NA ``` ```r coef(lm(reasoning ~ 0+order:training, ds_vb_18)) # f: 6 ``` ``` ## orderA:trainingcontrol orderB:trainingcontrol orderA:trainingupdating orderB:trainingupdating ## 0.6583 0.6901 0.7008 0.6654 ## orderA:trainingbinding orderB:trainingbinding ## 0.6553 0.6470 ``` ```r coef(lm(reasoning ~ order*training, ds_vb_18)) # g: 6 coef(lm(reasoning ~ 0+order*training, ds_vb_18)) # h: 6 coef(lm(reasoning ~ order+order:training, ds_vb_18)) # i: 6 ``` --- class: inline-grey ### Interim Summary - The `R` `formula` interface allows symbolic specification of statistical models. - `+` = main effects - `:` = interaction - `*` = main effects plus interaction - `0+`/`-1` = no intercept -- - Categorical variables are transformed into numerical variables using contrast functions (via `model.matrix()`; see Cohen et al., 2002) - If models include interactions, orthogonal contrasts (e.g., `contr.sum`) in which the intercept corresponds to the (unweighted) grand mean should be used: `afex::set_sum_contrasts()` - Dummy/treatment contrasts (`R` default) lead to simple effects for lower order effects. - **Coding only affects interpretation of parameters/tests not overall model fit.** -- - For models with solely numerical independent variables, suppressing intercept works as expected. - For models with categorical independent variables, suppressing intercept or other lower-order effects often leads to very surprising results (and should generally be avoided). -- ### Take-Home Message - Make sure all categorical variables are factors before adding them to a statistical model! - Factors are transformed into numerical covariates via contrast function, which can have surprising consequencues. - Default `R` 'treatment' contrasts are not appropriate for interactions (as 0 corresponds to first factor level). --- class: center, middle, inverse # Tests of Terms/Effects --- class: small .pull-left2[ ```r afex::set_sum_contrasts() m6 <- lm(reasoning ~ order*training, ds_vb_18) summary(m6) ``` ``` ## ## Call: ## lm(formula = reasoning ~ order * training, data = ds_vb_18) ## ## Residuals: ## Min 1Q Median 3Q Max ## -0.5077 -0.0868 0.0093 0.0932 0.2739 ## ## Coefficients: ## Estimate Std. Error t value Pr(>|t|) ## (Intercept) 0.66947 0.00944 70.91 <2e-16 *** ## order1 0.00198 0.00944 0.21 0.83 ## training1 0.00471 0.01304 0.36 0.72 ## training2 0.01361 0.01370 0.99 0.32 ## order1:training1 -0.01790 0.01304 -1.37 0.17 ## order1:training2 0.01573 0.01370 1.15 0.25 ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ## ## Residual standard error: 0.132 on 191 degrees of freedom ## Multiple R-squared: 0.0212, Adjusted R-squared: -0.00443 ## F-statistic: 0.827 on 5 and 191 DF, p-value: 0.532 ``` ] .pull-right2[ ```r ds_vb_18 %>% group_by(order, training) %>% summarise(m=mean(reasoning)) %>% ungroup() %>% summarise(mean(m)) ``` ``` ## # A tibble: 1 x 1 ## `mean(m)` ## <dbl> ## 1 0.6695 ``` ] -- .pull-left2[ **If model includes factors with more than 2 levels and interactions, we generally cannot interpret coefficients directly!** ] --- # `car::Anova()` is the Solution ```r require(car) # Companion to Applied Regression (Fox & Weisberg, 2011) Anova(m6, type = 3) ``` ``` ## Anova Table (Type III tests) ## ## Response: reasoning ## Sum Sq Df F value Pr(>F) ## (Intercept) 87.5 1 5028.12 <2e-16 *** ## order 0.0 1 0.04 0.83 ## training 0.0 2 0.99 0.37 ## order:training 0.0 2 1.08 0.34 ## Residuals 3.3 191 ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ``` -- - Type II and III tests equivalent for balanced designs (i.e., equal group sizes) and highest-order effect. - Type III tests require orthogonal contrasts (e.g.,`contr.sum`); recommended: - For experimental designs in which imbalance is completely random and not structural, - Complete cross-over interactions (i.e., main effects in presence of interaction) possible. - Type II are more appropriate if imbalance is structural (i.e., observational data). --- class: small, inline-grey # `emmeans` for Follow-Up/Post-Hoc Tests I .pull-left[ ```r library("emmeans") (emms <- emmeans(m6, "training")) ``` ``` ## training emmean SE df lower.CL upper.CL ## control 0.674 0.0156 191 0.643 0.705 ## updating 0.683 0.0172 191 0.649 0.717 ## binding 0.651 0.0162 191 0.619 0.683 ## ## Results are averaged over the levels of: order ## Confidence level used: 0.95 ``` `emmeans` returns estimated marginal means (or least-square means for linear regression) for model terms (e.g., `emmeans(m6, c("training", "order"))`). One can specify arbitrary contrasts on marginal means (e.g., `contrast()`). ] -- .pull-right[ ```r pairs(emms, adjust='holm') ``` ``` ## contrast estimate SE df t.ratio p.value ## control - updating -0.0089 0.0232 191 -0.384 0.7017 ## control - binding 0.0230 0.0225 191 1.023 0.6152 ## updating - binding 0.0319 0.0237 191 1.349 0.5365 ## ## Results are averaged over the levels of: order ## P value adjustment: holm method for 3 tests ``` ] --- class: small, inline-grey # `emmeans` for Follow-Up/Post-Hoc Tests II .pull-left[ ```r library("emmeans") (emms <- emmeans(m6, "training")) ``` ``` ## training emmean SE df lower.CL upper.CL ## control 0.674 0.0156 191 0.643 0.705 ## updating 0.683 0.0172 191 0.649 0.717 ## binding 0.651 0.0162 191 0.619 0.683 ## ## Results are averaged over the levels of: order ## Confidence level used: 0.95 ``` `emmeans` returns estimated marginal means (or least-square means for linear regression) for model terms (e.g., `emmeans(m6, c("training", "order"))`). One can specify arbitrary contrasts on marginal means (e.g., `contrast()`). ] .pull-right[ ```r cs <- list( "c-u" = c(1, -1, 0), "c-wm" = c(1, -0.5, -0.5), "c-uub" = c(1, -0.75, -0.25) ) contrast(emms, cs, adjust = "holm") ``` ``` ## contrast estimate SE df t.ratio p.value ## c-u -0.008900 0.0232 191 -0.384 1.0000 ## c-wm 0.007062 0.0196 191 0.361 1.0000 ## c-uub -0.000919 0.0206 191 -0.045 1.0000 ## ## Results are averaged over the levels of: order ## P value adjustment: holm method for 3 tests ``` ] --- class: inline-grey # Summary: Analysis with Statistical Models in R 1. Identify probability distribution of data (more correct: of residuals/conditional distribution) 2. Make sure variables are of correct type via `str()` 3. Set appropriate contrasts (orthogonal contrasts if model includes interaction): `afex::set_sum_contrasts()` 4. Describe statistical model using `formula` 4. Fit model: pass `formula` and `data.frame` to corresponding modeling function (e.g., `lm()`, `glm()`) 4. Check model fit (e.g., inspect residuals) 5. Test terms (i.e., main effects and interactions): Pass fitted model to `car::Anova()` Note: parameter estimates (e.g., `coef` or `summary`) are often not informative for models involving categorical variables, especially if the variables have more than three levels. 7. Follow-up tests: - Estimated marginal means: Pass fitted model to `emmeans::emmeans()` (formerly `lsmeans`) - Specify specific contrasts on estimated marginal means (e.g., `contrast()`, `pairs()`) -- `afex` combines correct coding (3.), fitting (5.) and testing (7.): - ANOVAs: `afex::aov_car()`, `afex::aov_ez()`, or `afex::aov_4()` - (Generalized) linear mixed-effects models: `afex::mixed()` --- class: small # ANOVAs with afex .pull-left[ `afex::aov_car()` allows specification of ANOVA using formula, but requires specification of participant id in `Error()` term. ```r library("afex") (a1 <- aov_car(reasoning ~ order + Error(code), ds_vb_18)) ``` ``` # Anova Table (Type 3 tests) # # Response: reasoning # Effect df MSE F ges p.value # 1 order 1, 195 0.02 0.01 <.0001 .94 # --- # Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '+' 0.1 ' ' 1 ``` ] -- .pull-right[ ```r (a2 <- aov_car(reasoning ~ order*training + Error(code), ds_vb_18)) ``` ``` ## Anova Table (Type 3 tests) ## ## Response: reasoning ## Effect df MSE F ges p.value ## 1 order 1, 191 0.02 0.04 .0002 .83 ## 2 training 2, 191 0.02 0.99 .01 .37 ## 3 order:training 2, 191 0.02 1.08 .01 .34 ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '+' 0.1 ' ' 1 ``` ] --- ## Plotting with `afex_plot` .pull-left[ ```r (a2 <- aov_car(reasoning ~ order*training + Error(code), ds_vb_18)) ``` ``` ## Anova Table (Type 3 tests) ## ## Response: reasoning ## Effect df MSE F ges p.value ## 1 order 1, 191 0.02 0.04 .0002 .83 ## 2 training 2, 191 0.02 0.99 .01 .37 ## 3 order:training 2, 191 0.02 1.08 .01 .34 ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '+' 0.1 ' ' 1 ``` for more see: https://cran.r-project.org/package=afex/vignettes/afex_plot_introduction.html ] .pull-right[ ```r afex_plot(a2, "training", "order", data_geom = geom_violin, data_arg = list(width = 0.4)) + theme(legend.position = "bottom") ``` <!-- --> ] --- ### Follow-Up Tests with `afex` - `afex` provides full support for `emmeans` .pull-left[ ```r emmeans(a2, "order") ``` ``` ## NOTE: Results may be misleading due to involvement in interactions ``` ``` ## order emmean SE df lower.CL upper.CL ## A 0.671 0.0137 191 0.644 0.698 ## B 0.667 0.0130 191 0.642 0.693 ## ## Results are averaged over the levels of: training ## Confidence level used: 0.95 ``` ] .pull-right[ ```r emmeans(a2, c("training")) %>% pairs ``` ``` ## NOTE: Results may be misleading due to involvement in interactions ``` ``` ## contrast estimate SE df t.ratio p.value ## control - updating -0.0089 0.0232 191 -0.384 0.9221 ## control - binding 0.0230 0.0225 191 1.023 0.5632 ## updating - binding 0.0319 0.0237 191 1.349 0.3698 ## ## Results are averaged over the levels of: order ## P value adjustment: tukey method for comparing a family of 3 estimates ``` ] --- # Working with Categorical Covariates - When you can, avoid inspecting parameters (i.e., avoid `summary()` or `coef()`) - Parameter estimates are only meaningful for factors with two levels. - Use `car::Anova()` or `afex` for estimating model and testing terms - Use `emmeans` for inspection of results on factor-levels and design cells -- - When models involve categorical variables and interactions, do not forget appropriate contrasts: `afex::set_sum_contrasts()` - Lower-order effects (coefficients and Type 3 tests) are evaluated at the origin of other variables. - `afex` always uses appropriate contrasts per default (without need for setting) -- - Use Type III tests for experimental designs and Type II tests for observational data - Both only differ for unbalanced data; in the way how unbalance is treated -- - Make many plots to understand interactions - Be careful when interpreting main effects in light of interactions --- class: inline-grey # Beyond Linear Models with Normal Residual Distribution Statistical models defined by relationship of covariates and assumption of residual probability distribution. `formula` defines the relationship of covariates, `function` defines distributional assumption. - (1) Most models assume independent data points (i.e., no replicates or repeated measures): - `lm()` linear model (normal distribution of residuals, includes multivariate IVs) - `glm()` generalized linear model (other residual distribution, e.g., binomial, Poisson) - `MASS::rlm()` robust linear model - `MASS::polr()` ordered logistic or probit regression - `MASS::loglm()` log-linear model (for contingency tables) - `nnet::multinom()` models for multinomial data - `ordinal::clm()` cumulative link models for ordinal data -- - (2) Models support repeated-measures usually via random-effects parameters: - `nlme::lme()` linear mixed-effects models (generally superseded by `lme4`) - `lme4::lmer()` linear mixed-effects models (modern implementation) - `lme4::glmer()` generalized linear mixed-effects models - `ordinal::clmm2()` cumulative link mixed models for ordinal data - `mcmcGLMM` Bayesian generalized linear mixed-effects models - `rstanarm::stan_lmer()`/`stan_glmer()` **Bayesian (generalized) linear mixed-effects models** - `brms::brm()` **general framework for formula-based Bayesian models; extremely flexible** --- class: center, middle, inverse # Repeated-Measures --- class: inline-grey # IID Assumption - Ordinary linear regression, between-subjects ANOVA, and basically all standard statistical models share one assumption: Data points are *independent and identically distributed* (*iid*). - Independence assumption refers to residuals: After taking structure of model (i.e., parameters) into account, probability of a data point having a specific value is independent of all other data points. - Identical distribution: All observations sampled from same distribution. -- - For repeated-measures independence assumption often violated (e.g., data points from one participant more likely to be similar to each other). - Violation of independence assumption can have dramatic consequences on statistical inferences from a model (e.g., increased or decreased Type I errors). -- - Three approaches for dealing with repeated-measures (e.g., Gelman & Hill, 2007): 1. *Complete pooling*: Ignore dependency in data (often not appropriate, results likely biased, not trustworthy) 2. *No pooling*: Two step procedure. 1. Separate data based on factor producing dependency and calculate separate statistical model for each subset. 2. Analysis of distribution of estimates from step 1. (Prone to overfitting which decreases precision of parameter estimates; estimation error accumulates in step 2; combination and analysis of individual estimates can be non-trivial if interest is in more than 1 parameter) 3. *Partial pooling*: Analyse data jointly while taking dependency into account (gold standard, e.g., mixed models, hierarchical models) --- class: small ### Complete Pooling - 1 set of parameters `\(\Theta\)` for data *n*, usually aggregated across participants - Likelihood: `\(ln(L({\bf n} \mid \Theta))\)` - Easy to implement - Ignores individual variability -- ### No Pooling - 1 set of parameters `\(\Theta_i\)` for each individual data set `\({\bf n_i}\)` - Likelihood: `\(\sum_{i = 1}^N ln(L({\bf n_i} \mid \Theta_i))\)` - Requires considerable data on the individual level, otherwise parameter prone to overfitting - Results sometimes not easy to combine -- ### Partial Pooling - 1 set of group-level parameters `\({\hat \Theta}\)` and 1 set of parameters `\(\Theta_i\)` for each individual data set `\({\bf n_i}\)` - Likelihood: `\(\sum_{i = 1}^N ln(L({\bf n_i} \mid \Theta_i, {\hat \Theta})) + ln(L(\Theta_i \mid {\hat \Theta}))\)` - Requires distributional assumption for group-level parameters (e.g., individual-level parameters normally distributed around group-level parameters) - Provides on average better estimates for both individual-level and group-level parameters (e.g., [Stein's paradox](https://en.wikipedia.org/wiki/Stein%27s_example)) - Estimation can be difficult in a frequentist setting (alternatives: penalized or restricted maximum likelihood estimation or Bayesian estimation) --- class: small ## Example Data: Productivity Scores for Machines and Workers ```r data("Machines", package = "MEMSS") str(Machines) ``` ``` ## 'data.frame': 54 obs. of 3 variables: ## $ Worker : Factor w/ 6 levels "1","2","3","4",..: 1 1 1 2 2 2 3 3 3 4 ... ## $ Machine: Factor w/ 3 levels "A","B","C": 1 1 1 1 1 1 1 1 1 1 ... ## $ score : num 52 52.8 53.1 51.8 52.8 53.1 60 60.2 58.4 51.1 ... ``` - `Worker`: `Factor` giving unique identifier for the worker. - `Machine`: `Factor` with levels A, B, and C identifying machine brand. - `score`: Overall productivity score taking into account number and quality of components produced. Research question: Do the machines differ in their score? -- ```r library("tidyverse") Machines %>% group_by(Machine) %>% summarise(m = mean(score), se = sd(score)/sqrt(n())) ``` ``` ## # A tibble: 3 x 3 ## Machine m se ## <fct> <dbl> <dbl> ## 1 A 52.36 0.9395 ## 2 B 60.32 1.924 ## 3 C 66.27 0.9886 ``` --- ## Example Data: Productivity Scores for Machines and Workers ```r ggplot(Machines, aes(x = Machine, y = score)) + geom_point() + facet_wrap("Worker") + theme_light() ``` <!-- --> --- ## Productivity Scores for Machines and Workers: Complete Pooling .pull-left[ (1) Aggregate data ```r mach_agg <- Machines %>% group_by(Worker, Machine) %>% summarise(score = mean(score)) ``` (2) Estimate model ```r afex::set_sum_contrasts() mmach <- lm(score ~ Machine, mach_agg) car::Anova(mmach, type = 3) ``` ``` ## Anova Table (Type III tests) ## ## Response: score ## Sum Sq Df F value Pr(>F) ## (Intercept) 64046 1 1727.43 <2e-16 *** ## Machine 585 2 7.89 0.0046 ** ## Residuals 556 15 ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ``` ] .pull-right[ (3) Follow-up test ```r library("emmeans") pairs(emmeans(mmach, "Machine"), adjust = "holm") ``` ``` ## contrast estimate SE df t.ratio p.value ## A - B -7.97 3.52 15 -2.266 0.0773 ## A - C -13.92 3.52 15 -3.959 0.0038 ## B - C -5.95 3.52 15 -1.693 0.1112 ## ## P value adjustment: holm method for 3 tests ``` ] --- class: small ## No Pooling (1) Select worker 1 ```r dm1 <- Machines %>% filter(Worker == "1") ``` (2) Estimate model for worker 1 ```r m1 <- lm(score ~ Machine, dm1) car::Anova(m1, type = 3) ``` ``` ## Anova Table (Type III tests) ## ## Response: score ## Sum Sq Df F value Pr(>F) ## (Intercept) 33391 1 72415 1.8e-13 *** ## Machine 336 2 364 5.4e-07 *** ## Residuals 3 6 ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 ``` (3) Record statistic of interest (e.g., *F*-value or *p*-value). (4) Calculate statistic for other workers and record as well. (5) Investigate distribution of statistics (e.g., all *p*-values smaller .05?). --- class: small ## Simple Partial-Pooling: Repeated-measures ANOVA ```r a1 <- aov_car(score ~ Error(Worker/Machine), Machines) a1 ``` ``` ## Anova Table (Type 3 tests) ## ## Response: score ## Effect df MSE F ges p.value ## 1 Machine 1.60, 8.00 17.77 20.58 *** .51 .0010 ## --- ## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '+' 0.1 ' ' 1 ## ## Sphericity correction method: GG ``` .pull-left[ ```r emmeans(a1, "Machine", adjust = "holm") %>% pairs ``` ``` ## contrast estimate SE df t.ratio p.value ## A - B -7.97 2.18 10 -3.660 0.0111 ## A - C -13.92 2.18 10 -6.393 0.0002 ## B - C -5.95 2.18 10 -2.733 0.0507 ## ## P value adjustment: tukey method for comparing a family of 3 estimates ``` ] -- .pull-right[ ```r emmeans(mmach, "Machine", adjust = "holm") %>% pairs ## complete pooling results ``` ``` ## contrast estimate SE df t.ratio p.value ## A - B -7.97 3.52 15 -2.266 0.0921 ## A - C -13.92 3.52 15 -3.959 0.0034 ## B - C -5.95 3.52 15 -1.693 0.2402 ## ## P value adjustment: tukey method for comparing a family of 3 estimates ``` ] --- class: small # Example Data 2 (Exercise 1.3)  --- class: small, inline-grey - **Skovgaard-Olsen et al. (2016)** - Conditional = *if-then* statement; e.g., If global warning continues, London will be flooded. - Bayesian reasoning often assumes 'the Equation': *P*(if *A* then *B*) = *P*(*B*|*A*) - Our question: Does 'the Equation' hold? - Participants provide idiosyncratic estimates of both *P*(if *A* then *B*) and *P*(*B*|*A*). - ~100 participants recruited via `crowdflower.com` worked on 12 items: > Sophia's scenario: Sophia wishes to find a nice present for her 13-year-old son, Tim, for Christmas. She is running on a tight budget, but she knows that Tim loves participating in live role-playing in the forest and she is really skilled at sewing the orc costumes he needs. Unfortunately, she will not be able to afford the leather parts that such costumes usually have, but she will still be able to make them look nice. -- (1) *P*(*B*|*A*) (`B_given_A`): > Suppose Sophia makes Tim an orc costume. > Under this assumption, how probable is it that the following sentence is true: > Tim will be excited about his present. -- (2) *P*(if *A* then *B*) (`if_A_then_B`): > Could you please rate the probability that the following sentence is true: > IF Sophia makes Tim an orc costume, THEN he will be excited about his present. -- - Exercise 1.3: Analyse the data using the no-pooling approach: `exercises/exercise_1.3_P.Rmd` - Calculate regression between *P*(if *A* then *B*) and *P*(*B*|*A*) separately for each participant. - Do results suggest an overall relationship between the two variables? If so, how strong? --- class: center, middle, inverse # Partial-Pooling for Skovgaard-Olsen et al. (2016) Data Requires Mixed-Models --- ## Summary: Repeated-Measures and Pooling - IID assumption (i.e., independent and identically distributed) shared by most "standard" statistical model. - In case of repeated-measures, independence assumption often violated (e.g., data points from one participant more likely to be similar to each other). - Violation of independence assumption can have dramatic consequences on statistical inferences from a model (e.g., increased or decreased Type I errors). - Three approaches for dealing with repeated-measures (e.g., Gelman & Hill, 2007): 1. *Complete pooling*: Ignore dependency in data (often not appropriate, results likely biased, not trustworthy) 2. *No pooling*: Two step procedure. 1. Separate data based on factor producing dependency and calculate separate statistical model for each subset. 2. Analysis of distribution of estimates from step 1. (Prone to overfitting which decreases precision of parameter estimates; estimation error accumulates in step 2; combination and analysis of ind 3. *Partial pooling*: Analyse data jointly while taking dependency into account (gold standard, e.g., mixed models, hierarchical models) --- ### References Statistical Modeling: - John Fox and Sanford Weisberg (2011). *An R Companion to Applied Regression, Second Edition.* Thousand Oaks CA: Sage. URL: http://socserv.socsci.mcmaster.ca/jfox/Books/Companion - Gelman, A., & Hill, J. (2007). *Data analysis using regression and multilevel/hierarchical models.* Cambridge; New York: Cambridge University Press. - Russell V. Lenth (2016). Least-Squares Means: The R Package lsmeans. *Journal of Statistical Software*, 69(1), 1-33. https://doi.org/10.18637/jss.v069.i01 - Cohen, J., Cohen, P., West, S. G., & Aiken, L. S. (2002). *Applied Multiple Regression/Correlation Analysis for the Behavioral Sciences.* New York: Routledge Academic. ### References Example Data: - De Simoni, C., & von Bastian, C. C. (2018). Working memory updating and binding training: Bayesian evidence supporting the absence of transfer. _Journal of Experimental Psychology: General_, 147(6), 829–858. https://doi.org/10.1037/xge0000453 - Skovgaard-Olsen, N., Singmann, H., & Klauer, K. C. (2016). The relevance effect and conditionals. *Cognition*, 150, 26-36. https://doi.org/10.1016/j.cognition.2015.12.017